RSNA 2019: AI as a Peer-Review Tool

Peer review is a viable application for artificial intelligence (AI) software that identifies abnormalities on X-rays, say radiologists from New Delhi who described this application at the 2019 RSNA annual meeting. The researchers conducted a quality assurance study evaluating commercial AI software designed to detect abnormalities in chest radiographs.

Noting that quality control in radiology is usually performed by random double reads, the RADPEER approach, or collating information about clinical correlation, session presenter Vasanthakumar K Venugopal, MBBS, MD, said, “Numerous studies have shown that these methods are not optimized to discover cases with diagnostic error. Thus, they have inherent limitations to improve quality. Non-random peer review processes are time consuming and not exhaustive.”

“My colleagues and I wanted to determine if an AI tool could help or improve the peer review process,” added Dr. Venugopal, of the Center for Advanced Research in Imaging, Neuroscience and Genomics of Mahajan Imaging.

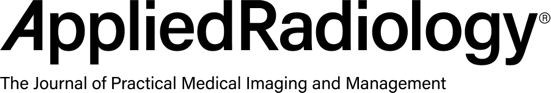

Calcification/Nodule: A tiny calcified nodule is present in the right lower lung zone.

Image courtesy of Mahajan Imaging/Lunit.

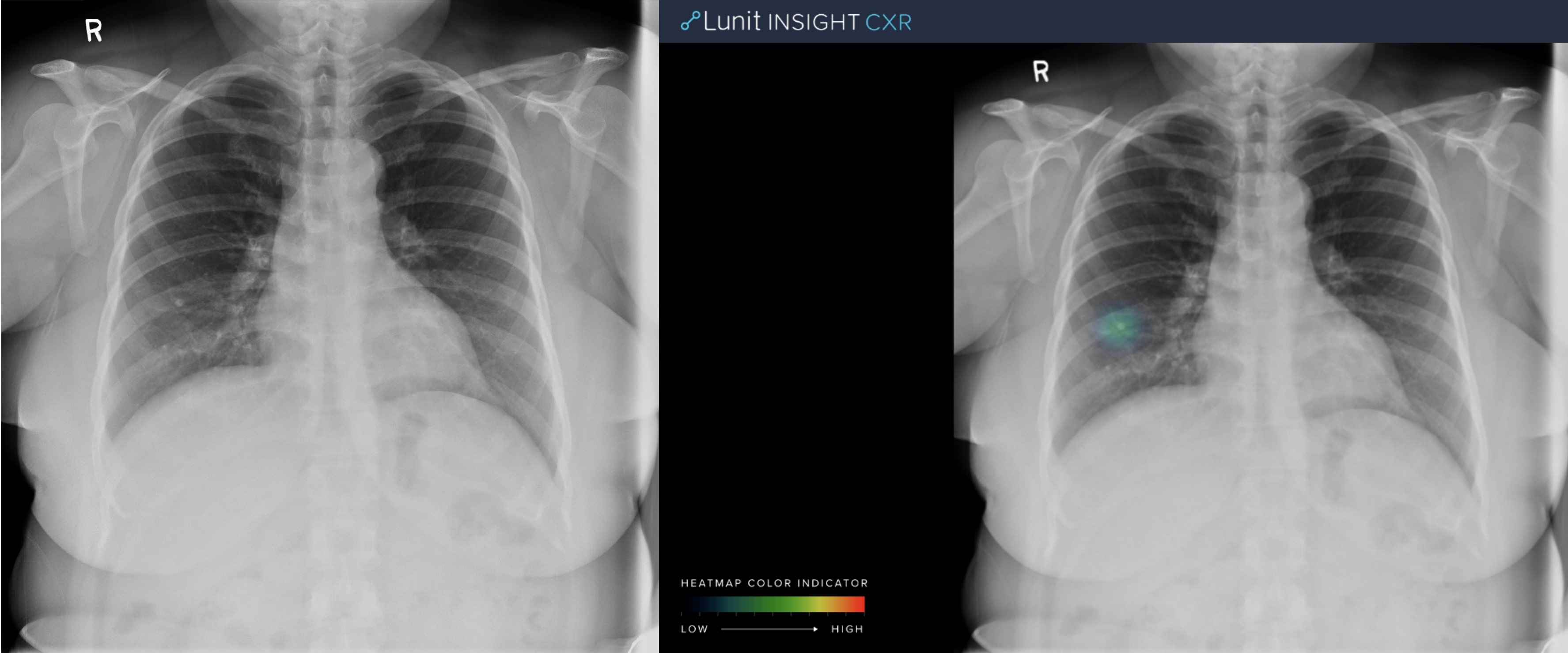

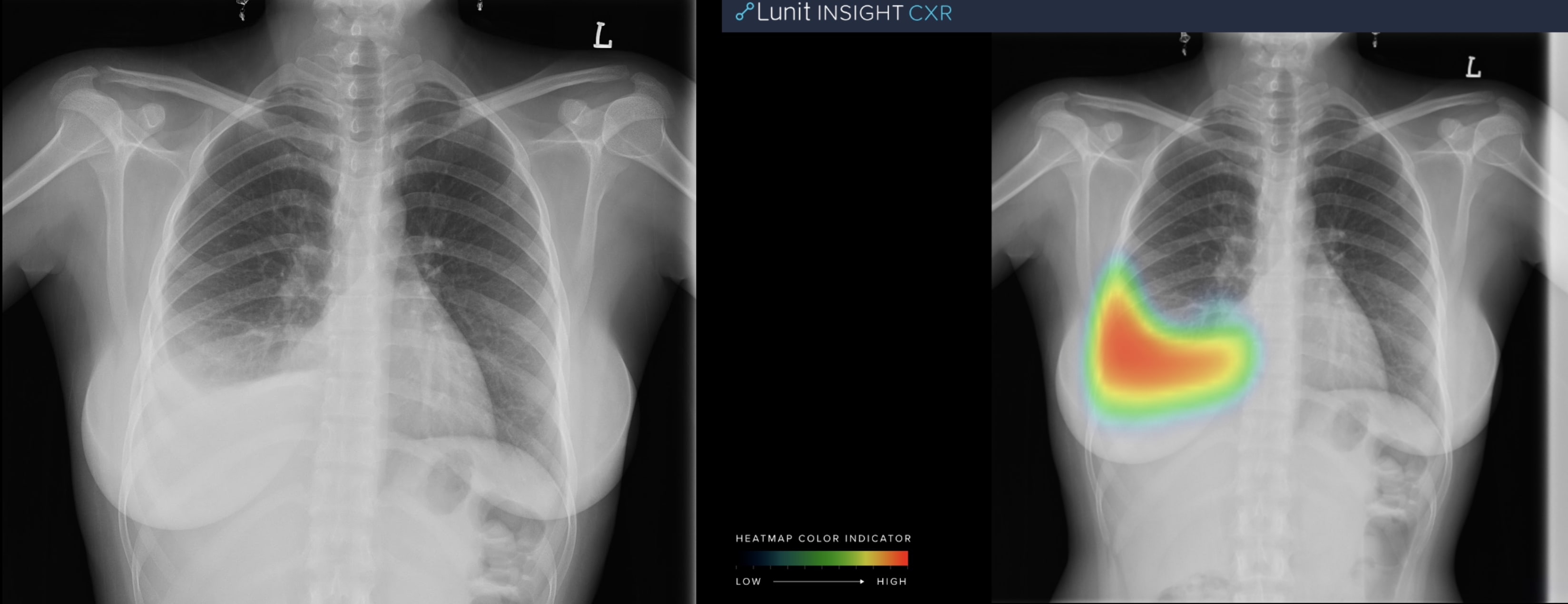

Cardiomegaly: Mild cardiomegaly is present.

Image courtesy of Mahajan Imaging/Lunit.

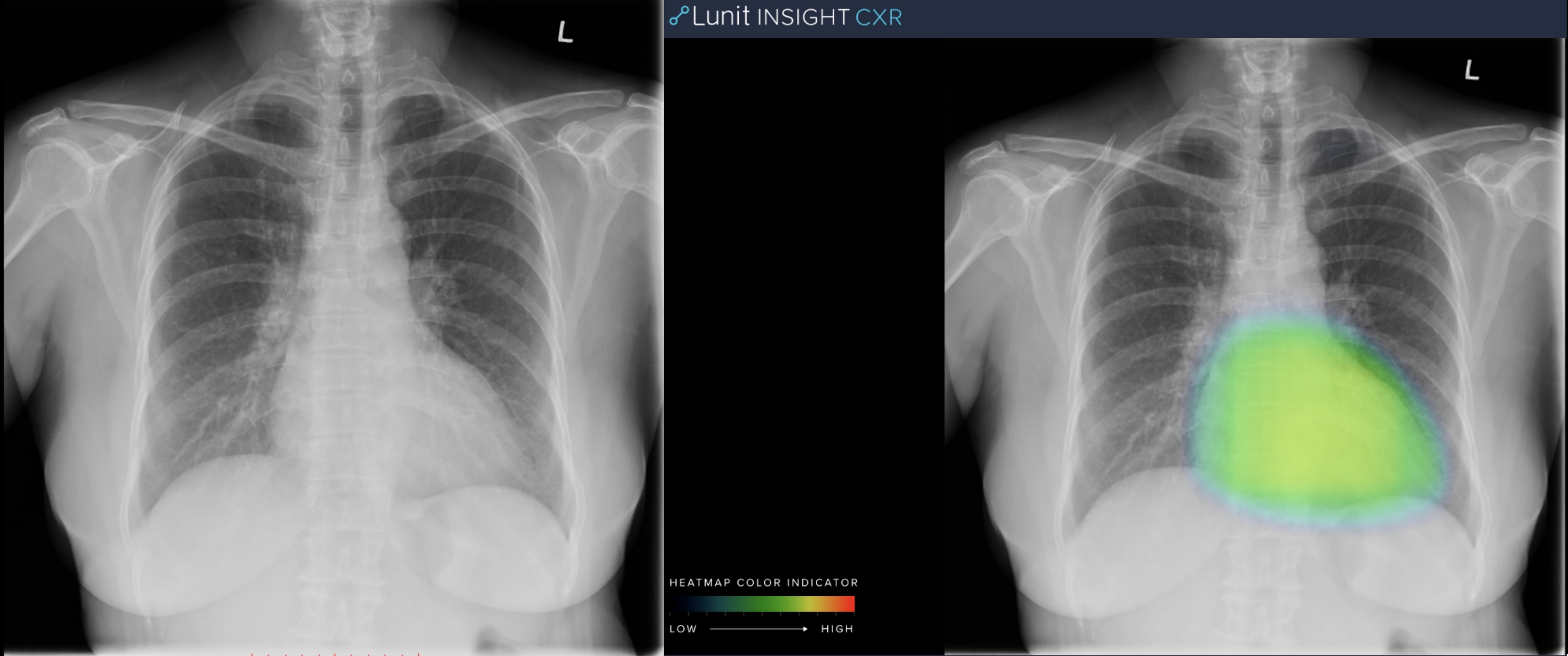

Consolidation: Patchy consolidation is seen in the right upper lobe.

Image courtesy of Mahajan Imaging/Lunit.

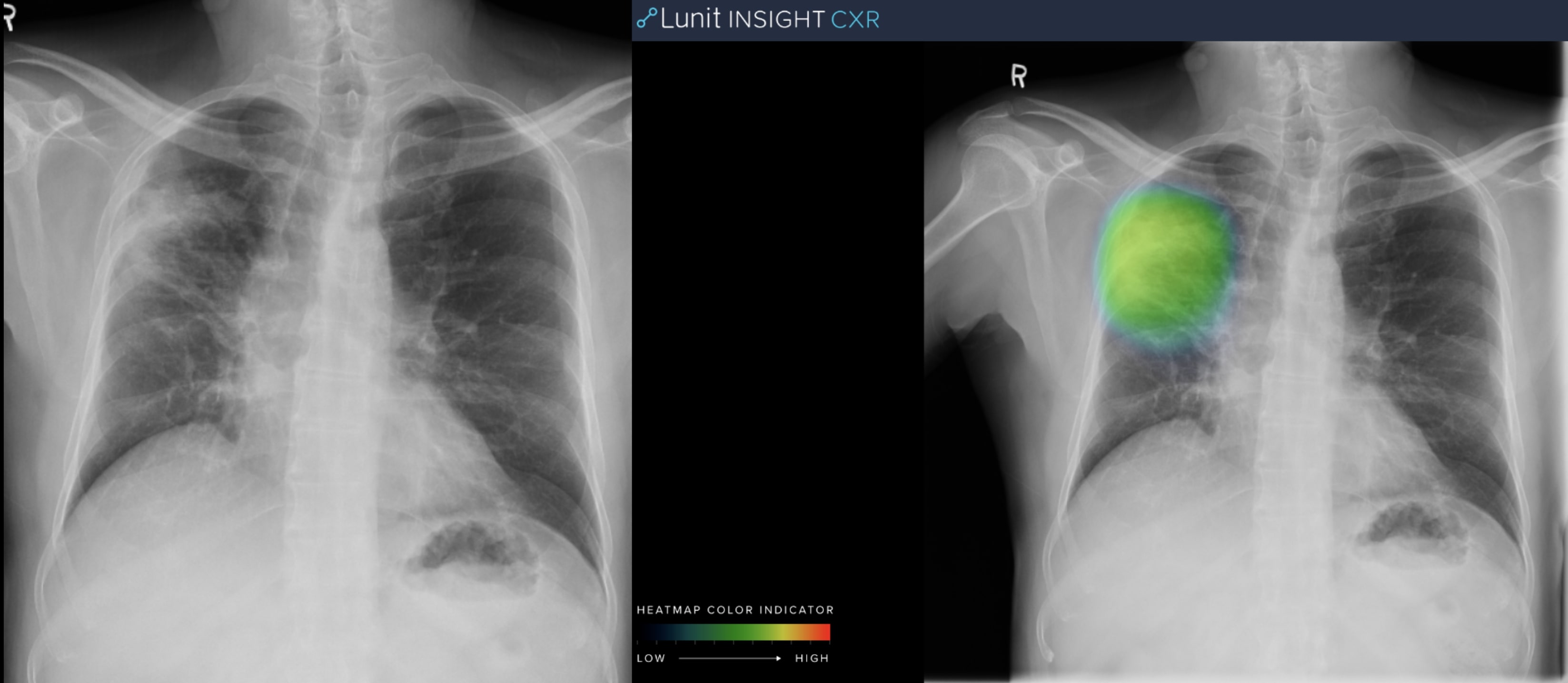

Pleural Effusion: Pleural effusion is present in the right pleural cavity.

Image courtesy of Mahajan Imaging/Lunit.

The team used a commercial deep-learning algorithm as a second reader to evaluate AI-assisted quality assessment of radiology reports. Their objectives were to measure the frequency of errors and to assess the overall impact of AI on the quality assurance (QA) process.

Data from adult chest radiographs performed in January 2019 at four outpatient imaging departments were pooled. At each site, the radiology workflow included the additional step of applying a “normal” or “abnormal” label to the exams.

All radiographs marked normal were prospectively analyzed by a deep-learning algorithm (LUNIT Insight, Seoul, South Korea) designed to detect 10 of the most common findings on chest radiographs. The algorithm creates heat maps of detected lesions, scores the probability of abnormality, and creates a case report summarizing results by finding.

The algorithm, Dr. Venugopal said, was trained on more than 200,000 cases clinically proven on CT. In prior research, the algorithm performed at a standalone accuracy of 98% to 99%. None of the chest radiographs in these validation studies had been performed in India.

Tuned for automated normal versus abnormal classification, the algorithm estimated an abnormality score of 0.00 to 1.00, with a fixed operating threshold of 0.15 in high-sensitivity testing. A subspecialty chest radiologist reviewed images flagged by the algorithm, as well as the original reports. Confirmed critical errors were reported to the quality control team for corrective action and feedback to the reporting radiologists.
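The flagging step described above can be sketched in a few lines of Python. This is only an illustration, not the vendor's or the researchers' implementation: the `Exam` record, its field names, the sample scores, and the choice of `>=` (rather than `>`) at the threshold are all assumptions. Only the 0.00 to 1.00 score range and the 0.15 operating threshold come from the presentation.

```python
from dataclasses import dataclass

# Operating threshold reported for high-sensitivity testing.
THRESHOLD = 0.15


@dataclass
class Exam:
    exam_id: str              # hypothetical identifier
    report_label: str         # "normal" or "abnormal", set by the reporting radiologist
    abnormality_score: float  # algorithm output between 0.00 and 1.00


def flag_for_second_read(exams, threshold=THRESHOLD):
    """Return exams reported as normal whose AI score reaches the threshold.

    Assumption: a score equal to the threshold counts as flagged.
    """
    return [
        e for e in exams
        if e.report_label == "normal" and e.abnormality_score >= threshold
    ]


# Made-up example scores, for illustration only.
exams = [
    Exam("A", "normal", 0.03),
    Exam("B", "normal", 0.15),
    Exam("C", "normal", 0.62),
    Exam("D", "abnormal", 0.91),
]

flagged = flag_for_second_read(exams)
flagged_ids = [e.exam_id for e in flagged]
print(flagged_ids)
```

Exams already reported as abnormal are skipped because the study applied the algorithm only to radiographs marked normal; the flagged list is what would go to the subspecialty chest radiologist for review.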

The algorithm flagged 46 abnormal chest radiographs out of 708, or 6.5%, that had been reported as normal. Twelve radiographs were confirmed by the radiologist as abnormal. These included four with lung nodules, three with significant blunting of costophrenic angles or effusions, two with apical fibrosis, two with cardiomegaly, and one with consolidation.
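The arithmetic behind these figures can be checked with a short Python sketch. The counts are taken from the presentation; the variable names are illustrative, and the two derived rates (the share of flagged exams confirmed as abnormal, and confirmed misses as a share of all exams reported normal) are computed here from those counts rather than quoted from the researchers.

```python
# Counts reported in the presentation.
reported_normal = 708
flagged_by_ai = 46
confirmed_abnormal = 12

# Breakdown of confirmed findings, as listed in the presentation.
confirmed_findings = {
    "lung nodule": 4,
    "costophrenic angle blunting or effusion": 3,
    "apical fibrosis": 2,
    "cardiomegaly": 2,
    "consolidation": 1,
}

# The breakdown should add up to the confirmed total.
breakdown_total = sum(confirmed_findings.values())

# Share of normal-reported exams flagged by the algorithm.
flag_rate = flagged_by_ai / reported_normal

# Derived here, not quoted: share of flagged exams the radiologist confirmed.
confirmation_rate = confirmed_abnormal / flagged_by_ai

# Derived here, not quoted: confirmed misses among all exams reported normal.
missed_rate = confirmed_abnormal / reported_normal

print(f"Flagged: {flag_rate:.1%}")
print(f"Confirmed among flagged: {confirmation_rate:.1%}")
print(f"Confirmed among reported normal: {missed_rate:.1%}")
```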

“AI algorithms can quickly parse through large datasets to help identify errors made by radiologists in a random peer review process,” said Dr. Venugopal. “This could enable a second read of the bulk of studies … There is a clear, measurable positive impact for radiologists and there is no risk from AI judgments to patients,” he added.

REFERENCE

- Mahajan V, Venugopal VK, Gupta S, et al. Deploying deep learning for quality control: An AI-assisted review of chest X-rays reported as normal in routine clinical practice. Radiological Society of North America 2019 Scientific Assembly and Annual Meeting, December 1-6, 2019, Chicago, IL. archive.rsna.org/2019/19008598.html